The core objective of a Cybersecurity programmer is to develop lightweight yet effective Cybersecurity tools. Many programming languages can serve this purpose. Python is a high-level language that has gained popularity for properties such as interactivity and support for object-oriented programming. It is also an interpreted language that executes statements one at a time, which makes debugging easier. These properties, however, are not the main reason programmers choose it. Python’s powerful libraries are what have made the language the preferred choice of many developers. With Python, developers do not need to write every piece of code from scratch when building the ethical hacking or penetration testing features of a tool. Instead, they can import the functionality from pre-built, open-source Python libraries. The following is a brief overview of some Python libraries/modules that help developers build powerful Cybersecurity tools without writing separate code for each feature.

Scapy

Scapy is a powerful Python module that can be used for penetration testing tasks such as scanning, probing, unit testing, and tracerouting. It is designed to sniff, send, interpret, and manipulate network packets. The basic idea behind Scapy is to send packets and get a meaningful response. This gives Scapy an advantage over tools like Nmap, where the response is mostly reduced to a simple status (open/closed/filtered). Scapy, by contrast, lets developers define the packets (requests), match each one with its response (answer), and return the results as a list of (request, answer) couples. Many tools ignore packets that the target network/host does not answer. Scapy instead reports them in a second list of unmatched (unanswered) packets, so no information is lost. Apart from sending and receiving probe packets, Scapy can send invalid frames to the target host, inject 802.11 frames, decode VoIP packets on WEP-encrypted channels, and more.

All of these features become available once the module is imported with the import statement.

    #!/usr/bin/env python
    import sys
    from scapy.all import *

The statement ‘from scapy.all import *’ in the above code tells the interpreter to import everything that the Scapy module exports. We can also import only the names we need by replacing the asterisk (*) with a comma-separated list of those names. See the following example.

    #!/usr/bin/env python
    import sys
    from scapy.all import ICMP, IP, ARP

Scapy Documentation: https://scapy.readthedocs.io/en/latest/index.html

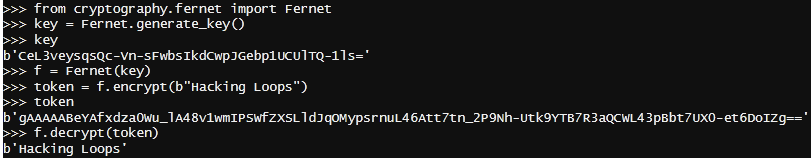

Cryptography

Many Cybersecurity development projects require cryptographic functions such as data encryption, decryption, and secret key generation. The Python Cryptography library covers all of these tasks because it ships with a wide range of cryptographic algorithms. Its functions are divided into two groups (layers), i.e. (a) the recipes layer and (b) the hazardous materials layer. The functions in the recipes layer are easy to use. The following ‘recipes layer’ example uses Cryptography’s Fernet class to generate a symmetric key and to encrypt and decrypt a plain-text string (Hacking Loops).
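
A minimal sketch of that recipes-layer workflow, assuming the `cryptography` package is installed. The variable names are illustrative, and the wrong-key check at the end is an addition meant to show that Fernet tokens are authenticated.

```python
from cryptography.fernet import Fernet, InvalidToken

# Generate a random symmetric key (URL-safe base64). Keep it secret.
key = Fernet.generate_key()
fernet = Fernet(key)

# Encrypt the plain text; the result is an authenticated token.
token = fernet.encrypt(b"Hacking Loops")

# Decrypt with the same key to recover the original bytes.
plaintext = fernet.decrypt(token)
print(plaintext.decode())

# Decrypting with a different key fails instead of returning garbage.
try:
    Fernet(Fernet.generate_key()).decrypt(token)
    wrong_key_rejected = False
except InvalidToken:
    wrong_key_rejected = True
print("wrong key rejected:", wrong_key_rejected)
```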

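The hazardous materials layer exposes lower-level cryptographic primitives such as authenticated encryption, asymmetric algorithms, key wrapping, and two-factor authentication. These primitives are more flexible but also easier to misuse, which is why they are kept separate from the recipes layer.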
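
As one possible illustration of the hazardous materials layer, the sketch below uses the library's AES-GCM class for authenticated encryption. The choice of AES-GCM, the 256-bit key size, and the associated-data value are assumptions made for this example, not something the article prescribes.

```python
import os

from cryptography.hazmat.primitives.ciphers.aead import AESGCM

# 256-bit key for AES-GCM (key size chosen for this sketch).
key = AESGCM.generate_key(bit_length=256)
aesgcm = AESGCM(key)

# A 96-bit nonce; it must never be reused with the same key.
nonce = os.urandom(12)

# Associated data is authenticated but not encrypted (illustrative value).
associated_data = b"header-v1"

ciphertext = aesgcm.encrypt(nonce, b"Hacking Loops", associated_data)
recovered = aesgcm.decrypt(nonce, ciphertext, associated_data)
print(recovered.decode())
```

Unlike Fernet, this layer leaves nonce handling and key storage to the caller, so mistakes such as nonce reuse are not prevented by the library.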

Cryptography Documentation: https://cryptography.io/en/latest/

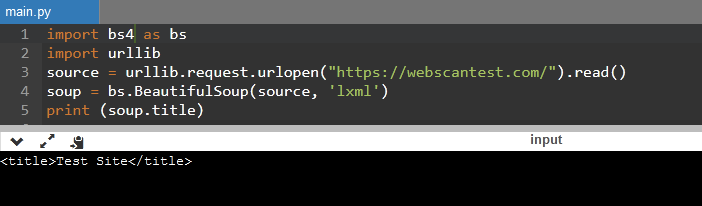

Beautiful Soup

Information gathering is an important phase of penetration testing. Sometimes, penetration testers need to extract information from HTML/XML pages. Writing a tool from scratch, or doing the work manually, can take hours or days in complex projects. Beautiful Soup is a useful Python library that automates such data scraping tasks by parsing data out of HTML and XML files. The following example code shows how to import the Beautiful Soup library into another project to scrape web page data.

It is important to note here that, since Beautiful Soup version 4, the package is imported under the name bs4. In the example, a target URL (webscantest.com) is specified in the code, and the page is passed through the lxml parser to extract its title. Many other functions are available for extracting more detailed information from target web pages.
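
A minimal sketch of title and link extraction with bs4. To keep it self-contained and runnable offline, it parses an inline HTML string (an invented sample page) instead of fetching webscantest.com, and it uses Python's built-in `html.parser` rather than lxml so that no extra dependency is required. Passing `"lxml"` as the second argument works the same way when lxml is installed.

```python
from bs4 import BeautifulSoup

# Invented sample page for this sketch; in practice this would be the body
# of an HTTP response from the target site.
HTML = """
<html>
  <head><title>Example Test Site</title></head>
  <body>
    <a href="/login.php">Login</a>
    <a href="/search.php">Search</a>
    <a>No link target</a>
  </body>
</html>
"""

# Parse the markup with the standard-library parser.
soup = BeautifulSoup(HTML, "html.parser")

# Extract the page title as plain text.
title = soup.title.get_text(strip=True)

# Collect the target of every anchor that actually has an href attribute.
links = [anchor["href"] for anchor in soup.find_all("a", href=True)]

print(title)
print(links)
```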

Beautiful Soup Documentation: https://www.crummy.com/software/BeautifulSoup/bs4/doc/

Impacket

‘Impacket’ is a Python library made up of classes for working with network protocols, including Ethernet, IP, TCP, UDP, ICMP, ARP, IGMP, etc. Its main objective is to give users low-level programmatic access to network packets, so that they can create and decode them, and to provide higher-level implementations of protocols such as Server Message Block (SMB) and Microsoft Remote Procedure Call (MSRPC). SMB is used for file sharing in Windows-based networks, whereas MSRPC lets a client call procedures on a remote Windows host, which makes it possible, for example, to collect Windows events without installing an agent on the host. Impacket can be used to develop security tools for remote code execution, authentication (plain/NTLM/Kerberos), SMB/MSRPC, password handling, and MITM attacks.

Impacket Documentation: https://pypi.org/project/impacket/

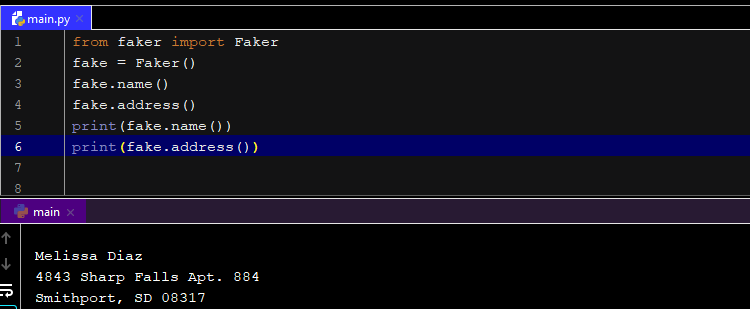

Faker

Fake data plays an important role in Cybersecurity. It can act as a placeholder during testing as well as in real Cybersecurity operations. Faker is a powerful Python library that generates random data such as names, addresses, emails, countries, text, URLs, etc. Consider the following example, which creates a Faker() object and uses it to produce a random name and address.

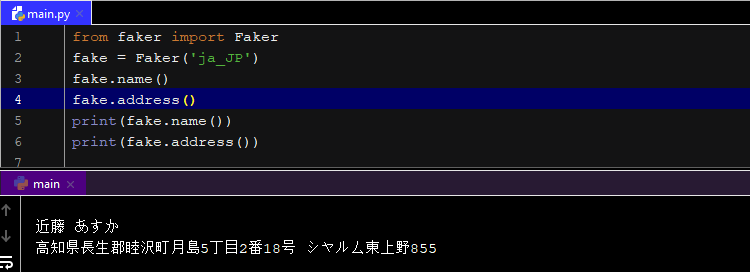

Localization is an important factor to consider when generating fake data. Sometimes, we require data from a specific region. Faker can take a locale as an argument to produce fake data for that region. Consider the following example, where we have passed the Japanese (ja_JP) locale as the argument.

We can see the output in Japanese, with random data for the same country. We can also pass more than one locale to generate random data belonging to multiple locales in a single run. If no locale is selected, Faker generates results using the default ‘en_US’ (English, United States) locale.

Faker Documentation: https://faker.readthedocs.io/en/master/

Requests

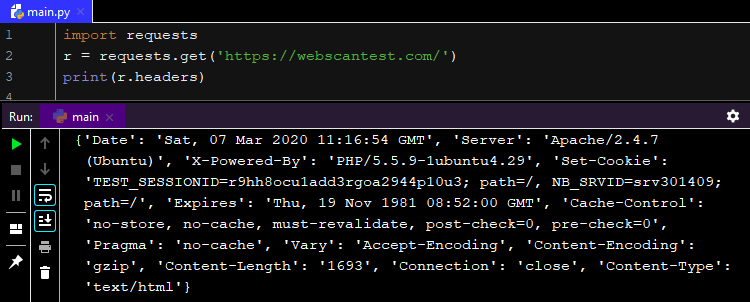

The ‘Requests’ library is useful in projects that need to communicate with web applications. Whenever we open a web page, we make a request, and that is exactly what the ‘Requests’ library does. It sends HTTP/1.1 requests to web servers without the need to manually add query strings to the target URLs. The library supports many request-related features, such as HTTP(S) proxy support, keep-alive connections and connection pooling, SSL verification, automatic content decoding and decompression, Basic and Digest authentication, chunked requests, multipart file uploads, and streaming downloads. The power of the ‘Requests’ library can be seen in the following screenshot, where we have extracted all the header information of the target web host with two lines of code.
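
A minimal sketch of both ideas, assuming the `requests` package is installed. The helper `fetch_headers` is a hypothetical name for the two-line header lookup, and `https://example.com` is a placeholder target. The second part prepares a request without sending it, to show how the library builds the query string from a dictionary.

```python
import requests


def fetch_headers(url, timeout=10):
    """Return the response headers of `url` as a plain dict (hypothetical helper)."""
    response = requests.get(url, timeout=timeout)
    return dict(response.headers)


# Build (but do not send) a request to see the query string that Requests
# generates from the params dictionary. No network access happens here.
prepared = requests.Request(
    "GET",
    "https://example.com/search",  # placeholder URL for this sketch
    params={"q": "scapy", "page": 2},
).prepare()

print(prepared.method, prepared.url)
```

Calling `fetch_headers("https://example.com")` performs the actual network request and returns headers such as `Content-Type` and `Server`, depending on what the target host sends.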

Requests Documentation: https://requests.readthedocs.io/en/latest/